In a recent Cities essay, Milad Malekzadeh makes the case that planners should engage with off-the-shelf AI as curators rather than coders: professionals who ask the right questions of these tools, validate outputs, and integrate results into planning work. In reality, many planners are already using LLMs to help them research emerging trends, outline staff reports, summarize public comments, and draft policy documents. The question is no longer whether to adopt them but how to do so responsibly. And the answer starts with data you can trust.

One of Malekzadeh’s clearest warnings is about data. By default, LLMs are trained on static web content that likely does not include the critical spatial and demographic datasets needed to make reliable and actionable observations. An LLM asked about a town’s housing stock will answer with confidence, but the numbers behind that answer may be a scraped ACS table from several years ago, blended with a 2018 blog post and a realtor’s marketing page. The more time-sensitive or locally specific the question, the less reliable the generic model becomes.

Other recent scholarship sharpens the point. In the Journal of the American Planning Association, Sanchez, Brenman, and Ye identify bias, transparency, accountability, privacy, and misinformation as the core ethical concerns planners need to navigate, warning that models can quietly perpetuate existing biases and exclude marginalized communities from the decisions that most affect them. A Discover Cities study surveying planners working with generative AI found that the most-cited risks in practice are exactly what Malekzadeh predicts: errors, biases, and plausible-sounding incorrect content. Hallucinations don’t announce themselves in a staff report; a confident figure pulled from a chatbot can shape a rezoning vote before anyone has time to check it.

None of this is an argument for disengaging from AI. It is an argument for greater awareness of the risks, and especially for making sure the model is working from data you can stand behind. For planners, that means sources that are current, documented end-to-end, consistently defined across geographies, and accurately crosswalked. Documentation often matters as much as accuracy: a number you can defend in a public meeting needs a source, a date, and a validated methodology behind it. This is how the curator role Malekzadeh describes comes into focus: a planner who can trust the inputs can use LLMs to investigate hard questions and generate insights the community can rely on for effective planning work.
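For readers who want to make that checklist concrete, here is a minimal Python sketch of what "a source, a date, and a validated methodology" could look like as a check run before a figure goes into a staff report. Everything in it is an illustrative assumption rather than part of any real tool: the `SourcedFigure` record, the `missing_provenance` and `is_stale` helpers, and the two-year staleness threshold are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class SourcedFigure:
    """A single number plus the provenance needed to defend it (hypothetical schema)."""
    label: str
    value: float
    source: Optional[str] = None        # e.g. the name of the dataset or table
    as_of: Optional[date] = None        # the date the figure describes or was released
    methodology: Optional[str] = None   # a pointer to documented methods


def missing_provenance(fig: SourcedFigure) -> List[str]:
    """Return the provenance fields that are absent from a figure."""
    missing = []
    if not fig.source:
        missing.append("source")
    if fig.as_of is None:
        missing.append("date")
    if not fig.methodology:
        missing.append("methodology")
    return missing


def is_stale(fig: SourcedFigure, today: date, max_age_days: int = 730) -> bool:
    """Treat undated figures, or figures older than the threshold, as stale.

    The 730-day default is an arbitrary assumption; choose a threshold that
    fits the release cycle of the data you actually use.
    """
    if fig.as_of is None:
        return True
    return (today - fig.as_of).days > max_age_days


# Placeholder values only: a chatbot-supplied number with a source but nothing else.
unverified = SourcedFigure(label="housing units", value=0.0, source="chatbot answer")
print(missing_provenance(unverified))  # ['date', 'methodology']
```

A figure that comes back with any missing fields, or that `is_stale` flags, is one to trace to its original source before it reaches a public meeting.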

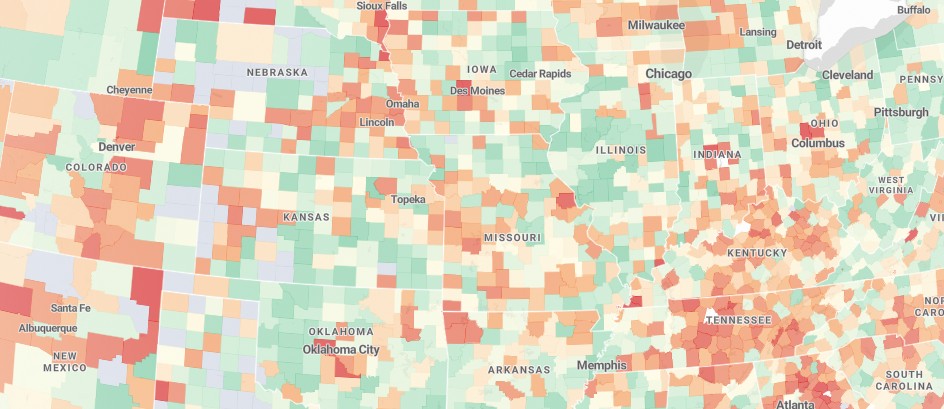

We built Housing Forecast to close the data gap and give planners and other stakeholders a more trustworthy source of critical information. The database combines ACS, PUMS, BLS, HUD, FRED, and other demographic and market data for every U.S. city, town, and county into a single validated dataset with documented methodologies. Our platform offers planners (and their LLMs) a reliable data source for accurate, timely communication and policymaking in today’s rapidly changing housing discourse.